Vibe Coding and Technical Debt: What the Data Shows

Your team is shipping faster than ever — and that might be your biggest engineering risk right now.

AI-assisted development has changed the economics of software creation in a very short time. Work that used to take a senior engineer two days can now take two hours. Velocity metrics look great. Demos impress stakeholders. Sprints close on time. But underneath that productivity surface, something else is accumulating: quietly, steadily, and at scale.

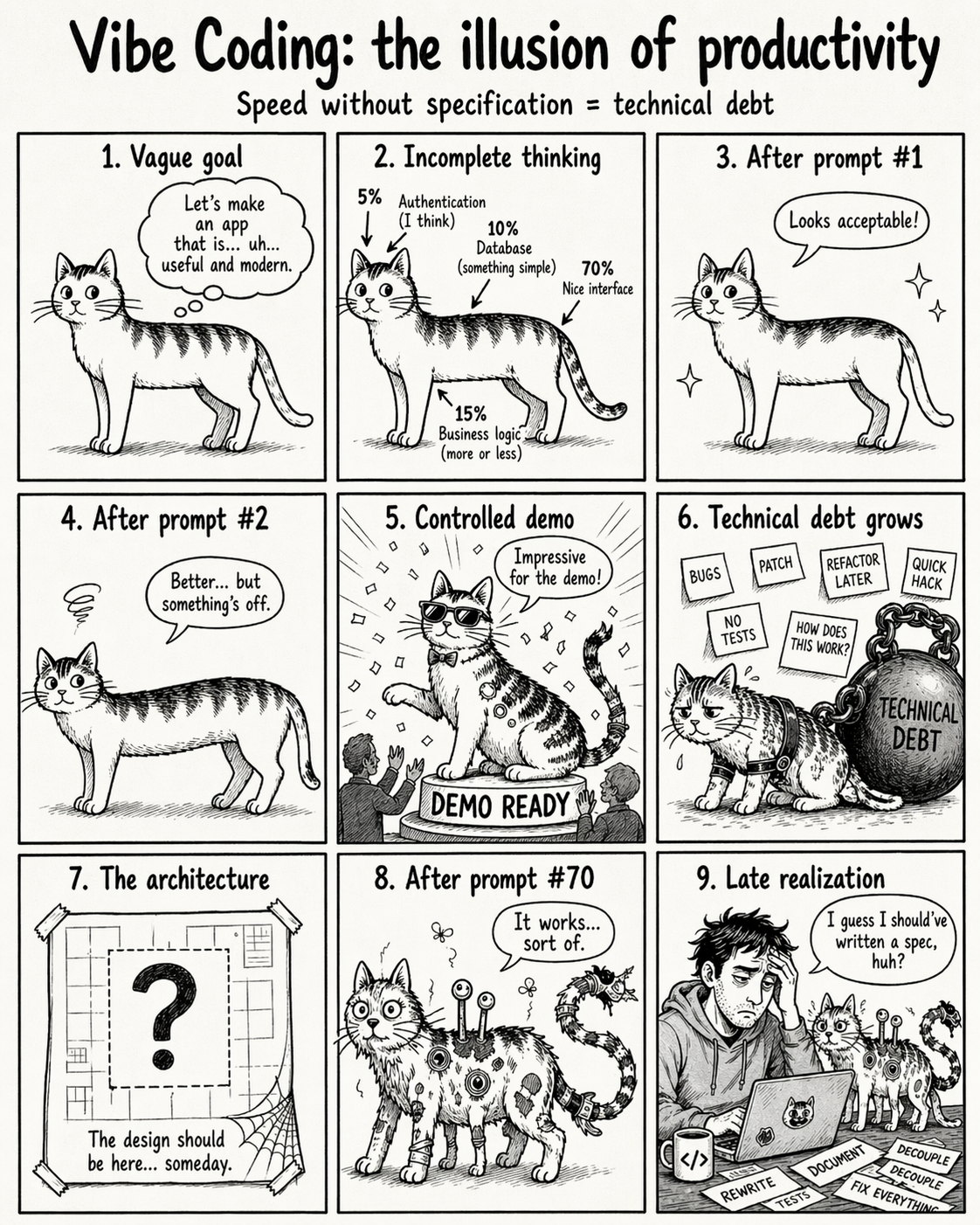

This is the real problem with vibe coding: not that it makes writing code easier, but that it makes it dangerously easy to look like you’re doing engineering when you’re actually just producing output.

What “Vibe Coding” Actually Means — and Why It Matters for Engineering Leaders

Vibe coding refers to a development style where engineers work conversationally with AI: they describe what they want, iterate through prompts, correct by approximation, and move fast. Even Google’s developer documentation frames it as a way to make development more accessible. The promise is real. The risk is in the misuse.

Here’s the analogy that engineering leaders need to internalize: using AutoCAD doesn’t make someone an architect. Using Photoshop doesn’t make someone a designer. And using AI-assisted code generation doesn’t make someone a good software engineer. Tools amplify capability — they don’t replace the underlying expertise required to govern complex systems over time.

AI reduces the friction of writing code. It does not eliminate the difficulty of understanding dependencies, reasoning about architecture, managing complexity, designing for observability, protecting secrets, ensuring traceability, integrating security, validating edge cases, or maintaining a system that lives in production for years. The asymmetry is critical: AI reduces the cost of creation, but not the cost of governance.

The Data Engineering Leaders Can’t Ignore

This isn’t a philosophical concern. The numbers are already showing up in the research, and they should inform your AI adoption strategy.

DORA Report 2024: AI Adoption Correlated with Lower Throughput and Stability

The 2024 DORA report estimated that a 25% increase in AI adoption was associated with a 1.5% decrease in delivery throughput and a 7.2% decrease in delivery stability. The tools promised acceleration. The data shows friction that is delayed and less visible.

Stack Overflow Developer Survey: Only 43% Trust AI Output Accuracy

Despite widespread adoption, only 43% of developers say they trust the accuracy of AI-generated code. When that skepticism doesn’t translate into review rigor, it becomes a liability buried in your codebase.

GitClear 2025: The Refactoring Collapse

- Copy/pasted lines increased from 8.66% in 2021 to 12.32% in 2024

- “Moved” lines — a proxy for refactoring — dropped from 24.65% to 9.47%

- Cloned code grew fourfold

Healthy software doesn’t just add things. It reorganizes, abstracts, simplifies, consolidates, and eliminates redundancy. When teams optimize for generation speed over comprehension depth, what’s expanding isn’t capacity — it’s maintenance surface.
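The duplication trend above can also be tracked inside your own repository. The following is a minimal sketch, not GitClear’s methodology: it assumes a clone can be approximated as any window of consecutive non-blank lines that appears more than once. The function names and the default window size are hypothetical choices for illustration.

```python
from collections import Counter


def normalize(line: str) -> str:
    """Collapse whitespace so formatting differences do not hide clones."""
    return " ".join(line.split())


def duplicated_line_ratio(sources: dict[str, str], window: int = 4) -> float:
    """Return the share of non-blank lines that sit inside a repeated window.

    `sources` maps a file name to its text. A window is `window` consecutive
    non-blank lines; a window seen more than once anywhere counts as a clone.
    """
    files = {
        name: [normalize(line) for line in text.splitlines() if line.strip()]
        for name, text in sources.items()
    }
    # Count every window across all files.
    counts = Counter(
        tuple(lines[i:i + window])
        for lines in files.values()
        for i in range(len(lines) - window + 1)
    )
    total = duplicated = 0
    for lines in files.values():
        flagged = [False] * len(lines)
        for i in range(len(lines) - window + 1):
            if counts[tuple(lines[i:i + window])] > 1:
                flagged[i:i + window] = [True] * window
        total += len(lines)
        duplicated += sum(flagged)
    return duplicated / total if total else 0.0
```

Running this per release and plotting the ratio over time gives a rough early signal. A rising line suggests the team is adding code faster than it is consolidating it.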

The Security Dimension: This Is Not Theoretical

The Linux kernel maintainers have already issued guidance for AI-assisted contributions: do not allow an agent to add Signed-off-by tags, use Assisted-by for proper traceability, and assume full human responsibility for every line submitted.
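A team can enforce a similar rule on its own commits. The sketch below is an illustration under stated assumptions, not the kernel’s tooling: it treats the last paragraph of a commit message as the trailer block (Git’s real trailer parsing is more involved), and `AGENT_NAMES`, `check_commit`, and the `ai_assisted` flag are hypothetical.

```python
import re

TRAILER = re.compile(r"^(?P<key>[A-Za-z][A-Za-z-]*):\s*(?P<value>.+)$")

# Hypothetical: identities your organization knows to be automated agents.
AGENT_NAMES = {"example-agent"}


def parse_trailers(message: str) -> list[tuple[str, str]]:
    """Return (key, value) pairs from the last paragraph of a commit message."""
    paragraphs = [p for p in message.strip().split("\n\n") if p.strip()]
    if len(paragraphs) < 2:  # a subject line alone carries no trailers
        return []
    pairs = []
    for line in paragraphs[-1].splitlines():
        match = TRAILER.match(line.strip())
        if match:
            pairs.append((match["key"].lower(), match["value"].strip()))
    return pairs


def signer_name(value: str) -> str:
    """Extract the name part of 'Name <email>' in lower case."""
    return value.split("<", 1)[0].strip().lower()


def check_commit(message: str, ai_assisted: bool) -> list[str]:
    """Return a list of policy violations; an empty list means the commit passes."""
    trailers = parse_trailers(message)
    signoffs = [v for k, v in trailers if k == "signed-off-by"]
    assisted = [v for k, v in trailers if k == "assisted-by"]
    problems = []
    if not signoffs:
        problems.append("missing human Signed-off-by")
    for value in signoffs:
        if signer_name(value) in AGENT_NAMES:
            problems.append(f"agent sign-off not allowed: {value}")
    if ai_assisted and not assisted:
        problems.append("AI-assisted change lacks an Assisted-by trailer")
    return problems
```

A check like this fits naturally in a commit-msg hook or a CI step. It cannot prove that a human understood the change, only that someone put their name on it.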

Anthropic’s Project Mythos demonstrated that AI agents can autonomously identify thousands of zero-day vulnerabilities across operating systems and browsers, and independently exploit them, including a documented case involving FreeBSD. If flaws can be found at that speed, code that nobody on the team fully understands or observes becomes a liability: teams that deploy it expand their attack surface in ways traditional security reviews aren’t designed to catch.

The Productivity Illusion in Practice

The pattern typically looks like this: velocity metrics improve in the short term, developers feel productive, and leadership sees faster delivery and concludes the AI investment is paying off. What doesn’t show up immediately: duplication that creates inconsistency bugs three sprints later, authentication logic that fails at the boundary, observability gaps that slow incident response, and architecture decisions that made sense for the prompt but not for the system.

By the time these costs materialize, the causal link to the generation tooling is invisible. It looks like normal engineering entropy. This is how technical debt accumulates in the age of AI: not dramatically, but incrementally, through thousands of small decisions where the cost of writing was zero and the cost of understanding was deferred.

A Practical Framework for Engineering Leaders

1. Use AI for Exploration, Not Abdication

AI-generated code is an excellent first draft. It is a poor substitute for architectural judgment. The first version can come from the model. The structure, the contracts, and the design decisions cannot.

2. Define Risk Zones and Apply Them Consistently

- Lower risk: prototypes, internal utilities, test fixtures, non-critical tooling

- Higher risk: authentication, payments, permissions, infrastructure, data pipelines, public APIs

High-risk zones require human-authored architecture, line-level review, and explicit sign-off. Make this policy explicit, not implicit.
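One way to make the policy explicit is to encode it. The sketch below assumes risk zones can be derived from file paths. The patterns, the requirement names, and both functions are hypothetical and would need to match your repository’s real layout.

```python
from fnmatch import fnmatchcase

# Hypothetical path patterns for higher-risk areas of the codebase.
HIGH_RISK_PATTERNS = [
    "src/auth/*",
    "src/payments/*",
    "infra/*",
    "api/public/*",
]

# What each zone requires before a change may merge.
REQUIREMENTS = {
    "high": {"human_authored_design", "line_level_review", "explicit_sign_off"},
    "low": set(),
}


def risk_zone(path: str) -> str:
    """Classify one changed path as 'high' or 'low' risk."""
    return "high" if any(fnmatchcase(path, p) for p in HIGH_RISK_PATTERNS) else "low"


def missing_requirements(changed_paths: list[str], satisfied: set[str]) -> set[str]:
    """Return the requirements a change still lacks.

    A change takes the highest zone of any path it touches.
    """
    zone = "high" if any(risk_zone(p) == "high" for p in changed_paths) else "low"
    return REQUIREMENTS[zone] - satisfied
```

Keeping the patterns in version control means any change to the policy itself goes through review, which is what separates an explicit policy from an implicit one.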

3. Require Traceability

For any part of your codebase, your team needs to be able to say what was generated, by which model, what was reviewed, and what was modified by a human. Without traceability, you cannot reason about your risk exposure.
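What such a record might contain can be sketched as a small data structure. The field names and helper functions below are hypothetical, and the sketch says nothing about where the records are stored; it only shows the questions a provenance log should be able to answer.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Provenance:
    """One hypothetical provenance entry for a changed file."""
    path: str
    generated: bool              # was the first draft produced by a model?
    model: Optional[str]         # which model, if known
    reviewed_by: Optional[str]   # human reviewer, or None if unreviewed
    human_modified: bool         # did a human edit the generated draft?


def unreviewed_generated(records: list[Provenance]) -> list[str]:
    """Paths whose generated code has no human reviewer on record."""
    return [r.path for r in records if r.generated and r.reviewed_by is None]


def exposure_by_model(records: list[Provenance]) -> dict[str, int]:
    """Count generated files per model, to see where exposure concentrates."""
    counts: dict[str, int] = {}
    for r in records:
        if r.generated:
            key = r.model or "unknown"
            counts[key] = counts.get(key, 0) + 1
    return counts
```

If a model version is later found to produce a particular class of flaw, `exposure_by_model` is the kind of query that tells you how much code to re-review.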

4. Measure What Actually Matters

Stop optimizing for velocity alone. Track: deployment stability, post-release defect rate, incident recovery time, code duplication trends, and rework frequency. If velocity is up but stability is down, you’re not ahead — you’re borrowing against future capacity.
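The “velocity up, stability down” signal can be computed from deployment records. The sketch below assumes each deployment is logged with whether it caused an incident and how long recovery took. The `Deployment` type and the function names are hypothetical, and deployment count per period stands in for velocity.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Deployment:
    failed: bool                   # did this release cause a production incident?
    recovery_minutes: float = 0.0  # time to restore service when it failed


def change_failure_rate(deploys: list[Deployment]) -> float:
    """Share of deployments that caused an incident."""
    return sum(d.failed for d in deploys) / len(deploys) if deploys else 0.0


def mean_recovery_minutes(deploys: list[Deployment]) -> float:
    """Average recovery time across failed deployments only."""
    times = [d.recovery_minutes for d in deploys if d.failed]
    return mean(times) if times else 0.0


def velocity_up_stability_down(previous: list[Deployment],
                               current: list[Deployment]) -> bool:
    """True when a period shipped more often but failed at a higher rate."""
    return (len(current) > len(previous)
            and change_failure_rate(current) > change_failure_rate(previous))
```

Comparing two sprints or two quarters this way puts the stability cost on the same dashboard as the velocity gain.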

5. Four Questions Before Merging AI-Generated Code

- Who actually understands this code?

- How is it tested — including edge cases?

- How will it be observed in production?

- What is the failure plan when it breaks?

If the team can’t answer all four, the code isn’t ready to merge. This isn’t gatekeeping — it’s the minimum standard for code that will run in production systems your customers depend on.
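The four questions can be turned into a lightweight merge check. This sketch assumes the pull request description answers each one on a line of the form `Field: answer`. The field names and the `unanswered` function are hypothetical conventions, not a feature of any code-hosting platform.

```python
# Hypothetical field names mapped to the question each one answers.
REQUIRED_FIELDS = {
    "owner": "Who actually understands this code?",
    "tests": "How is it tested, including edge cases?",
    "observability": "How will it be observed in production?",
    "failure-plan": "What is the failure plan when it breaks?",
}


def unanswered(pr_body: str) -> list[str]:
    """Return the questions that the description leaves blank or omits."""
    answers: dict[str, str] = {}
    for line in pr_body.splitlines():
        key, sep, value = line.partition(":")
        if sep:
            answers[key.strip().lower()] = value.strip()
    return [
        question
        for field, question in REQUIRED_FIELDS.items()
        if not answers.get(field)
    ]
```

A non-empty result blocks the merge. The check only confirms that an answer exists, so a reviewer still has to judge whether the answer is good.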

The Craft Is More Valuable Now, Not Less

The conclusion many organizations draw from AI coding tools is that engineering expertise matters less. The opposite is true. When writing code was expensive, the cost forced deliberation. Now that writing code is cheap, deliberation has to be a conscious discipline — not an economic byproduct.

The engineers who will create the most durable value in the next decade aren’t the ones who can prompt the fastest. They’re the ones who can govern what gets built: who understand systems, own decisions, and take responsibility for what runs in production.

Vibe coding is a tool. Engineering judgment is the craft. Don’t confuse the two — and don’t let your organization’s metrics reward the tool at the expense of the craft.