The Analyst’s Comeback: Why AI Made Software Analysis More Important

For years, many companies treated software analysis as an uncomfortable stage: necessary, but slow; important, but barely visible; useful, but less celebrated than development.

In many organizations, the protagonist was “the team that builds.” The analyst was reduced to someone who gathers requirements, documents meetings, translates what the business says, and prepares user stories so others can do “the important part”: coding.

That vision is becoming outdated.

Artificial intelligence is not eliminating the need for analysis. It is doing exactly the opposite: it is showing, with brutal clarity, which organizations know what they want to build and which ones are simply generating code faster than before.

And between those two categories there is an enormous difference. It is not measured in lines of code. It is measured in technical debt, rewrites, rework, cost overruns, and lost business opportunities.

The Pandemic, the Bubble, and the Reset

The pandemic accelerated a transformation that was already underway. In 2020, digitalization stopped being a long-term strategic project and became a matter of survival. McKinsey documented that companies accelerated the digitalization of their customer interactions, supply chains, and internal operations by three to four years, and that the share of digital or digitally enabled products in their portfolios advanced, on average, by seven years.

That leap drove demand for technology talent. Companies that had previously postponed their digital projects began building e-commerce, service channels, automations, internal applications, data analytics, self-service systems, integrations, and operating platforms.

The market did not have enough developers, architects, testers, product owners, or technical leaders to absorb that demand.

And then another phenomenon appeared: nearshoring.

For many U.S. companies, Latin America became a natural alternative: talent in a nearby time zone, competitive costs compared to the U.S. market, and a growing base of software engineers. Deloitte has noted that Latin America represents an attractive opportunity to acquire and scale technology talent through nearshore models.

But for local Latin American companies, this had a painful side effect: they began competing for talent against companies that billed in dollars.

Salaries inflated. Many profiles became difficult to retain. Many local companies lost talent not because of culture, leadership, or purpose, but because they simply could not match the economic conditions offered by a foreign company.

For a time, it seemed the market would never correct itself. But bubbles also exist in technology.

Since 2022, the sector has experienced a sustained wave of layoffs. Crunchbase records around 127,000 workers laid off at U.S.-based technology companies during 2025, and TrueUp reports more than 245,000 people impacted globally that same year.

This is not the end of software. It is the end of a stage of disorganized growth, excessive hiring, and blind faith that more developers automatically equaled more value.

The Second Shake: Vibe Coding

Now comes a second shake: artificial intelligence applied to development.

Tools such as GitHub Copilot, Claude Code, Cursor, ChatGPT, Gemini, and others have changed the way software is built. Microsoft Research documented that, in a controlled experiment, developers with access to GitHub Copilot completed a task 55.8% faster than the group without Copilot.

That data point is important, but incomplete.

Completing an isolated task faster is one thing. Building a maintainable, secure, scalable enterprise system aligned with a business strategy is something very different.

This is where the concept of vibe coding appears: a form of development in which a person describes in natural language what they want and an AI generates, adjusts, or debugs the code. Collins Dictionary chose it as its Word of the Year for 2025 and defined it as a practice in which AI converts natural language into code.

This democratizes software creation. It also multiplies risk.

Today a founder can create a prototype. A business team can put together an internal tool. A junior developer can move faster. A senior can automate repetitive tasks. All of that is positive.

The problem starts when we confuse “it works in a demo” with “it is ready to run a business.”

An application can work and still be poorly designed. It can have an attractive interface and still have a fragile architecture. It can solve an immediate use case and still not support growth, auditing, security, integrations, traceability, regulatory changes, or product evolution.

It can be fast to build and extremely expensive to maintain.

And this is already showing up in the data.

Google Cloud’s 2024 DORA report found that AI adoption improves individual productivity and developer satisfaction, but can also negatively affect delivery performance: a 25% increase in AI adoption was associated with a 1.5% decrease in delivery throughput and a 7.2% decrease in delivery stability.

METR, in a controlled trial in 2025, found an even more counterintuitive result: experienced developers working on familiar repositories were 19% slower using AI tools, even though they themselves believed they had been faster.

GitClear, from another perspective, analyzed 211 million changed lines of code from 2020 to 2024 and found that lines associated with refactoring fell from 25% in 2021 to less than 10% in 2024, while lines classified as copied and pasted rose from 8.3% to 12.3%.

The reading is not “AI is bad.”

The correct reading is this: AI amplifies the system you already have.

If you have clarity, it amplifies clarity. If you have chaos, it amplifies chaos. If you have good analysis, it accelerates construction. If you have bad requirements, it accelerates rework.

Why the Analyst Returns to the Center

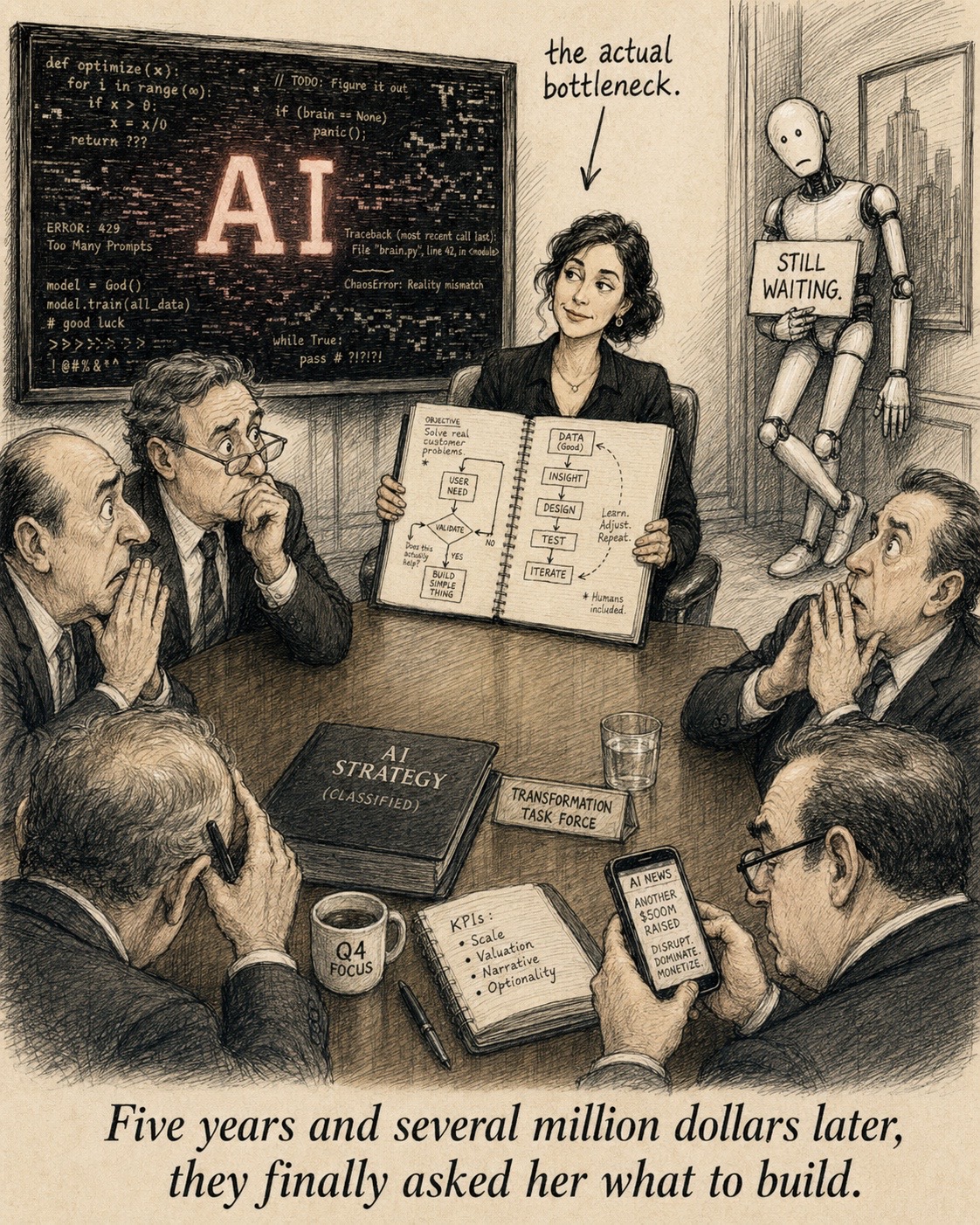

The new bottleneck is not writing code.

The new bottleneck is correctly defining what must exist, why it must exist, for whom, under what rules, with what constraints, with what dependencies, with what data, with what integrations, with what risks, and with what success criteria.

Andrew Lau, CEO of Jellyfish, put it this way in an interview with McKinsey: for decades we thought that coding was the hard part; it turns out that describing what to build is harder.

That sentence should be framed in every technology office.

Software analysis can no longer be seen as documentation prior to development. It must be seen as the architecture of product thinking.

A good analyst does not only ask: “what field does the form need?” They ask: What business decision does this field enable? Who captures it? Who validates it? What happens if it is empty? What system consumes it? What process breaks if it changes? What indicator improves? What legal, operational, or commercial risk appears? What experience will the user have? What part should be automated and what part requires human intervention?

In the era of AI, that clarity becomes code faster. So does ambiguity.

Therefore, modern analysis must evolve toward a hybrid discipline: business, product, architecture, data, user experience, security, automation, and prompt engineering.

The Analyst Must Also Learn Prompt Engineering

But not as a trend. And not as a trick for coaxing polished output from an AI.

Prompt engineering must be understood as a new way of converting requirements into executable, verifiable, and reusable instructions.

Anthropic recommends clear instructions, examples, structured prompts, and guided step-by-step reasoning to obtain better results with Claude. Microsoft, in its guide for Azure OpenAI, insists on providing context, using supporting data, and keeping source material as close as possible to the form of the expected answer to reduce errors.

This changes how we think about a user story. A good story should no longer end at acceptance criteria. It should be able to become an implementation prompt, a testing prompt, a security review prompt, a documentation prompt, and a functional validation prompt.

That is the leap.
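As a rough illustration of a story that turns into several prompts, here is a minimal Python sketch. Everything in it is an assumption made for the example: the `UserStory` fields, the `PURPOSES` wording, the `build_prompts` helper, and the sample invoice story are hypothetical and not tied to any particular tool or model.

```python
from dataclasses import dataclass, field

# Hypothetical instruction per prompt type; the five types mirror the ones
# named in the text, the wording is illustrative only.
PURPOSES = {
    "implementation": "Implement the behavior described below.",
    "testing": "Write automated tests, at least one per acceptance criterion.",
    "security_review": "Review the described behavior for security risks.",
    "documentation": "Write user-facing documentation for this behavior.",
    "functional_validation": "List the manual checks that confirm each criterion.",
}


@dataclass
class UserStory:
    role: str
    goal: str
    benefit: str
    acceptance_criteria: list[str] = field(default_factory=list)

    def statement(self) -> str:
        return f"As a {self.role}, I want {self.goal} so that {self.benefit}."


def build_prompts(story: UserStory) -> dict[str, str]:
    """Return one prompt per purpose, each carrying the full story."""
    if not story.acceptance_criteria:
        # Ambiguity would be copied into every prompt, so stop early.
        raise ValueError("story has no acceptance criteria")
    criteria = "\n".join(
        f"{i}. {c}" for i, c in enumerate(story.acceptance_criteria, start=1)
    )
    body = f"Story: {story.statement()}\n\nAcceptance criteria:\n{criteria}"
    return {name: f"{instruction}\n\n{body}" for name, instruction in PURPOSES.items()}


# Sample story, invented for the example.
story = UserStory(
    role="billing clerk",
    goal="to void an unpaid invoice",
    benefit="errors are corrected before the customer is charged",
    acceptance_criteria=[
        "Only invoices in status 'unpaid' can be voided.",
        "Voiding records who did it and when.",
    ],
)
prompts = build_prompts(story)
print(prompts["testing"])
```

The point of the sketch is that all five prompts share one source of truth. If the acceptance criteria are vague or missing, every derived prompt inherits the gap, which is why the helper refuses to run on a story without criteria.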

The analyst of the past documented what the user asked for. The analyst of the present designs the context so that humans and AI build correctly. The analyst of the future will be, in large part, a context engineer.

Anthropic already speaks of context engineering as the natural evolution of prompt engineering: it is not just about writing an instruction, but about curating and maintaining the right set of information available so that a model produces consistent results.

That idea is deeply relevant to software development, because an AI needs more than an instruction. It needs context: domain, rules, architecture, constraints, data, standards, prior decisions, and a definition of success.
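One way to make that list operational is to treat the context as a structure that can be checked for gaps before any generation starts. The sketch below is only an illustration: the `GenerationContext` class, its field names (taken from the eight elements listed above), and the `missing_sections` and `render` helpers are hypothetical and do not correspond to any vendor API.

```python
from dataclasses import dataclass, fields


@dataclass
class GenerationContext:
    # One field per context element named in the text; all names are assumptions.
    domain: str = ""
    rules: str = ""
    architecture: str = ""
    constraints: str = ""
    data: str = ""
    standards: str = ""
    prior_decisions: str = ""
    definition_of_success: str = ""


def missing_sections(ctx: GenerationContext) -> list[str]:
    """Names of the context sections that are still empty."""
    return [f.name for f in fields(ctx) if not getattr(ctx, f.name).strip()]


def render(ctx: GenerationContext, instruction: str) -> str:
    """Assemble the context and the instruction into a single prompt."""
    gaps = missing_sections(ctx)
    if gaps:
        raise ValueError("context incomplete: " + ", ".join(gaps))
    sections = "\n\n".join(
        f"## {f.name.replace('_', ' ').title()}\n{getattr(ctx, f.name)}"
        for f in fields(ctx)
    )
    return f"{sections}\n\n## Task\n{instruction}"


# Sample draft, invented for the example: only two sections are filled in.
draft = GenerationContext(
    domain="Invoicing for a B2B distributor",
    rules="Paid invoices are immutable",
)
print(missing_sections(draft))
# ['architecture', 'constraints', 'data', 'standards', 'prior_decisions', 'definition_of_success']
```

In this sketch `render` refuses to produce a prompt while any section is empty, so the analysis gaps surface before code is generated rather than after.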

A modern software factory should operate like this: first analysis, then design, then context, then assisted generation, then validation, then automation, then delivery. Not the other way around.

The New Question for CEOs, CIOs, and CTOs

The mistake of many companies will be believing they can skip analysis because they now have AI.

The success of the best will be understanding that AI makes analysis more profitable than ever.

A poorly defined requirement used to cost an extra meeting, a sprint correction, or a scope deviation. Today it can generate hundreds of lines of incorrect code, superficial tests, misleading documentation, and a false sense of progress.

AI does not eliminate rework. Without sound judgment behind it, AI industrializes rework.

Therefore, CEOs, CIOs, CTOs, and founders should stop asking only: “how many developers do I need?” The right question is: how well are we converting our business decisions into buildable systems?

That is the real competitive advantage.

The company that wins is not the one that produces the most code. It is the one that generates the least technical waste to reach the same business result.

The company that wins is not the one that launches a demo the fastest. It is the one that can evolve its product without rewriting it every six months.

The company that wins is not the one that replaces analysis with AI. It is the one that uses AI on a solid foundation of analysis.

The Starting Point of All Good Engineering

At VELAIO, we believe precisely that.

We do not understand software engineering as a frantic race to produce screens, endpoints, or lines of code. For us, the main input of a true software factory is good analysis: serious requirements gathering, business understanding, functional design, architecture, prioritization, acceptance criteria, data clarity, traceability, and product vision.

When that work is done well, AI and automation are not dangerous shortcuts. They become controlled accelerators.

They allow building faster, reducing rework, improving delivery quality, and focusing the technical team on higher-value decisions.

Our approach aims to deliver solid, better-designed solutions with shorter rework cycles. In well-defined scenarios, it achieves time reductions of 30% to 45% compared to traditional software factory models.

Not because we run faster without direction. But because we avoid running twice down the same path.

The new era of software does not belong to whoever generates the most code. It belongs to whoever best understands what must be built. And in that new era, the analyst is not a preliminary step before development. The analyst is the starting point of all good engineering.